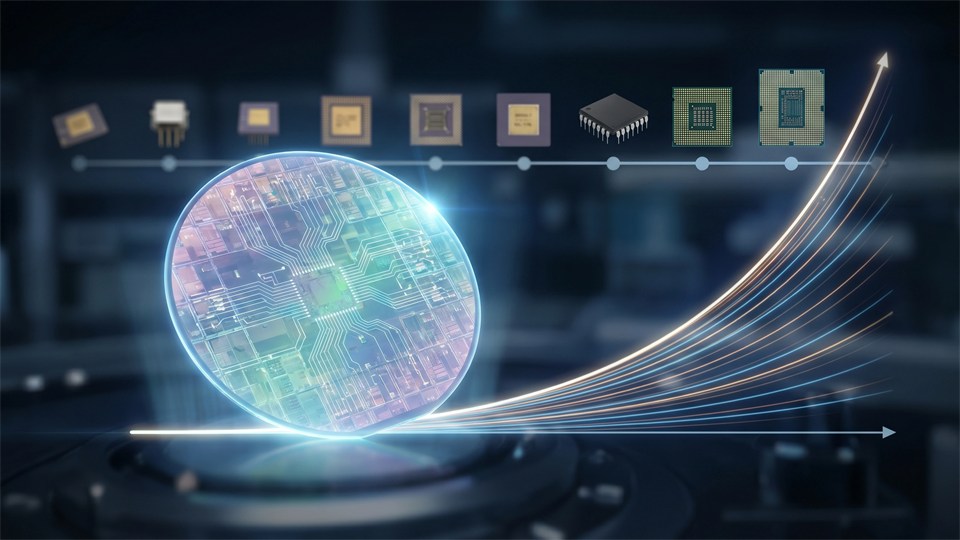

Moore's law is one of the best-known concepts in the history of technology. Although it is called a law, it is not a law of nature like gravity. Instead, it is an observation about how computer chips have developed over time. The idea was formulated in the 1960s and came to shape the entire electronics industry for decades. For beginners, Moore's law may seem like a technical topic, but in practice it is about something very concrete: why computers, phones, and many other digital products became faster, smaller, and cheaper over a long period.

When you understand Moore's law, you also understand an important part of the development of modern technology. It explains why an ordinary smartphone today can perform tasks that previously required large and expensive computers. At the same time, the concept helps explain why the chip industry is now facing new challenges. Moore's law is therefore both a historical landmark and a key to understanding today's technological limitations and possibilities.

Moore's law is named after Gordon Moore, who later co-founded Intel. In 1965, he observed that the number of transistors on an integrated circuit tended to double at regular intervals. A transistor is a very small electronic component that functions as a switch or amplifier in electronic circuits. The more transistors that can be placed on a chip, the more computation the chip can typically perform. Moore's original observation pointed to a doubling about every year; in 1975 he revised the pace to roughly every two years, which is the version most often quoted today.
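The difference between the two doubling periods is easier to see with a little arithmetic. The sketch below assumes perfectly steady exponential growth, which real chips never followed exactly; the function name `projected_transistors` and the starting count of 1,000 are illustrative assumptions, not historical data.

```python
def projected_transistors(initial_count: float, years: float,
                          doubling_period_years: float = 2.0) -> float:
    """Project a transistor count, assuming one doubling every
    `doubling_period_years` (an idealized, perfectly steady pace)."""
    return initial_count * 2 ** (years / doubling_period_years)


# Hypothetical starting point, chosen only to keep the numbers readable.
start = 1_000

for years in (2, 10, 20):
    yearly = projected_transistors(start, years, doubling_period_years=1.0)
    biennial = projected_transistors(start, years, doubling_period_years=2.0)
    print(f"after {years:>2} years: {yearly:,.0f} (doubling every year) "
          f"vs {biennial:,.0f} (doubling every two years)")
```

Under these assumptions, ten years of two-year doublings multiplies the starting count by 32, while ten years of yearly doublings multiplies it by 1,024. Small changes in the doubling period compound into very large differences.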

The important thing about Moore's law is not only the number itself, but the consequence of this development. If the number of transistors rises quickly and regularly, manufacturers can build more advanced processors without making them correspondingly larger. For many years, this meant that computers became more powerful while the cost per computation fell. As a result, technology became more accessible to both businesses and ordinary consumers. Over time, Moore's law became almost a roadmap for the entire industry, because many companies aligned their research, production, and expectations with it.

Before integrated circuits and modern chip manufacturing, electronics took up much more space. Computers could be as large as rooms or cabinets, and they required significant resources to build and operate. When it became possible to place more and more transistors on the same chip, it changed the entire relationship between size, cost, and performance. A smaller chip could perform more work than a much larger solution from an earlier generation. This made computers more practical, more energy-efficient, and easier to mass-produce.

For ordinary users, this meant, among other things, that personal computers became realistic. Later, laptops, mobile phones, digital cameras, game consoles, and smart devices became possible in the form we know today. If processing power had remained expensive and space-consuming, many of these products would either have been very limited or would not have existed at all. Moore's law was therefore not just a technical detail for engineers. It became a driving force behind digitalization in the home, in the workplace, in research, in communication, and in entertainment.

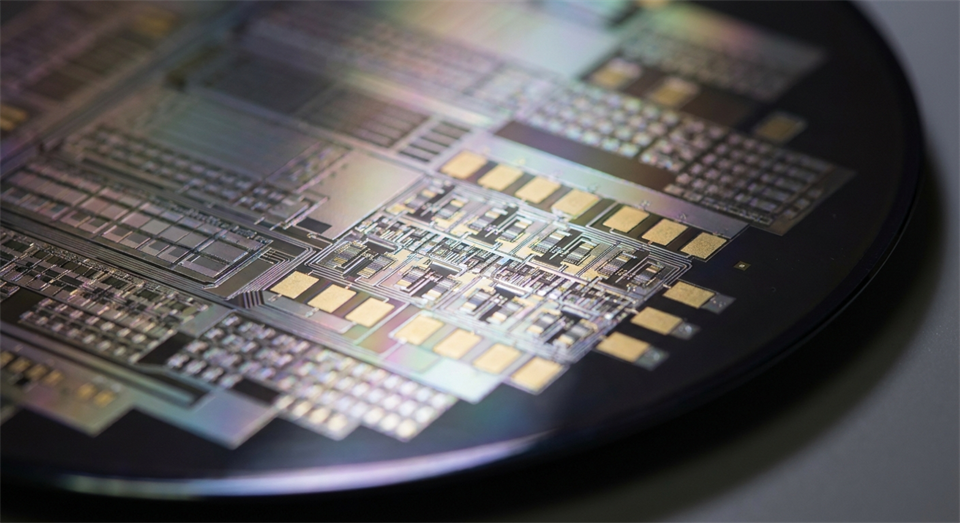

To understand Moore's law, it is useful to know the relationship between transistors and chips. A computer chip, also called a microchip (the processor is the best-known example), consists of enormous numbers of tiny transistors connected in complex patterns. These transistors can turn electrical signals on and off and thereby represent the binary values 0 and 1 on which digital electronics are built. When millions or billions of transistors work together, they can perform logical operations, store temporary data, and run advanced programs.

If you can make the transistors smaller, you can place more of them on the same surface. This creates more opportunities to increase a chip's functionality. A processor can, for example, gain more cores, larger cache, or more advanced control units. At the same time, signals can often travel shorter distances inside the chip, which can improve speed. In practice, miniaturization was therefore one of the most important reasons why computers became faster over time. Moore's law became a symbol of this miniaturization and the continuous improvement of integrated circuits.
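The link between smaller transistors and more transistors per chip is geometric: area shrinks with the square of the linear dimensions. The following is a minimal sketch of that idealized relationship. It assumes every dimension of a transistor shrinks by the same factor and ignores wiring, spacing rules, and other real-world layout constraints; `density_gain` is a hypothetical helper name.

```python
def density_gain(linear_shrink: float) -> float:
    """Return how many times more transistors fit on the same area when
    every linear dimension is multiplied by `linear_shrink`.

    Idealized: area per transistor scales with linear_shrink squared.
    """
    if not 0 < linear_shrink <= 1:
        raise ValueError("linear_shrink must be in the range (0, 1]")
    return 1.0 / linear_shrink ** 2


# Shrinking each dimension to about 70% of its previous size roughly
# doubles the number of transistors that fit on the same surface.
for shrink in (1.0, 0.7, 0.5):
    print(f"linear shrink {shrink:.1f} -> {density_gain(shrink):.2f}x density")
```

In this simplified picture, a doubling of transistor count does not require halving the size of each transistor; a reduction of about 30% per dimension is enough.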

For many years, you could almost feel Moore's law in everyday life. A new computer felt noticeably faster than the model from just a few years earlier. Programs that used to take a long time to open became more responsive. Graphics in games became more detailed. Image processing, video editing, and other demanding tasks gradually became possible on ordinary consumer machines. The same happened in the mobile world, where phones developed from simple communication devices into small computers with cameras, GPS, internet, and artificial intelligence.

Companies also used this development as a basis for planning. Software developers could count on future computers having more computing power and more memory. This made it possible to create more advanced programs and larger data systems. At the same time, the falling cost per transistor meant that electronics could be built into more types of products. As a result, computers found their way into cars, household appliances, medical equipment, industrial robots, and networking equipment. In this way, Moore's law helped spread digital technology far beyond the traditional computer.

Does Moore's law still hold today? The short answer is: both yes and no. For many years, developments roughly matched Moore's law, but over time it has become harder and more expensive to continue at the same pace. When transistors become extremely small, physical and technical problems arise. Materials behave differently at a very small scale, heat becomes a bigger problem, and production requires ever more advanced equipment. This means that it is no longer as easy to double the number of transistors at the same speed as before.

That is why many say that Moore's law is slowing down or changing character. However, this does not mean that progress has stopped. Chip manufacturers are still improving their products, but advances no longer come only from making transistors smaller. Instead, work is also being done on better architectures, more processor cores, specialized circuits, and smarter software. A modern chip can therefore become faster without necessarily following the classic version of Moore's law exactly. The concept is still useful, but it no longer describes the whole picture on its own.

One important reason for the slowdown is physics. When transistors become so small that their dimensions approach just a few nanometers, it becomes more difficult to control the electrons precisely. Small variations in materials and production can have a major impact on the result. In addition, heat generation becomes a central challenge. Even if you can pack more transistors onto a chip, it is not certain that you can get them all to work quickly without creating too much heat or using too much power.
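The heat problem can be illustrated with the standard first-order approximation for switching power in digital circuits: power is roughly proportional to switched capacitance, the square of the supply voltage, and the clock frequency. The sketch below uses that textbook approximation only; it ignores leakage current, and all numeric values are hypothetical placeholders rather than measurements of any real chip.

```python
def dynamic_power_watts(switched_capacitance_farads: float,
                        voltage_volts: float,
                        frequency_hz: float,
                        activity: float = 1.0) -> float:
    """First-order estimate of switching power: activity * C * V^2 * f.

    Leakage power is deliberately left out of this simplified model.
    """
    return (activity * switched_capacitance_farads
            * voltage_volts ** 2 * frequency_hz)


# Hypothetical baseline chip (values chosen for illustration only).
base = dynamic_power_watts(1e-9, 1.0, 3e9)

# Twice as many switching transistors at the same voltage and clock
# means roughly twice the switched capacitance, and so twice the power.
doubled = dynamic_power_watts(2e-9, 1.0, 3e9)

# Lowering the voltage helps quadratically, which is why voltage
# reductions mattered so much while they were still available.
lower_voltage = dynamic_power_watts(2e-9, 0.8, 3e9)

print(f"baseline: {base:.2f} W")
print(f"double the transistors: {doubled:.2f} W")
print(f"double the transistors at 0.8 V: {lower_voltage:.2f} W")
```

In this model, adding transistors without lowering the voltage or the clock frequency raises power in proportion, which is one way to see why packing more transistors onto a chip does not automatically mean they can all run at full speed.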

Another reason is economics. Factories for modern chip manufacturing are among the most expensive industrial facilities in the world. The machines are extremely advanced, and the development of new production processes requires enormous investments. This means that only a few companies can afford to be truly at the forefront. Moore's law originally concerned technological development, but today economics is almost as important as physics. Even if something is possible in the laboratory, it is not certain that it can be produced cheaply enough for the mass market.

When miniaturization can no longer drive progress on its own, the industry looks for other paths. One method is to make specialized chips for specific tasks. Instead of one processor having to be best at everything, different types of circuits can be used for graphics, artificial intelligence, signal processing, or encryption. This is one of the reasons why modern computers and phones often contain several different processing units that work together. This specialization can provide major gains in performance and energy efficiency.

Another direction is so-called chiplet design, where a processor is built from several smaller parts instead of one large monolithic chip. This can make production more flexible and improve yield in the factory. In addition, the industry is working with 3D packaging, where components are stacked more closely together to save space and shorten connections. Software also plays a bigger role than before. Better compilers, smarter algorithms, and more efficient use of hardware can provide noticeable improvements, even when raw transistor growth slows down.
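Why smaller dies can improve yield is easiest to see with a simple defect model. The sketch below uses the Poisson yield model, a common textbook approximation in which the chance that a die is defect-free falls exponentially with its area. It assumes chiplets are tested before assembly so that only good dies are combined, and it ignores packaging cost and the extra area needed for chiplet-to-chiplet links. The defect density and die areas are hypothetical.

```python
import math


def die_yield(area_cm2: float, defects_per_cm2: float) -> float:
    """Poisson yield model: probability that a die has zero defects."""
    return math.exp(-area_cm2 * defects_per_cm2)


def silicon_per_good_product(total_area_cm2: float, n_dies: int,
                             defects_per_cm2: float) -> float:
    """Average silicon area that must be manufactured to obtain one
    working product built from `n_dies` equal, pre-tested dies."""
    die_area = total_area_cm2 / n_dies
    return n_dies * die_area / die_yield(die_area, defects_per_cm2)


# Hypothetical numbers: 6 cm^2 of logic and 0.1 defects per cm^2.
TOTAL_AREA = 6.0
DEFECT_DENSITY = 0.1

for n in (1, 4):
    y = die_yield(TOTAL_AREA / n, DEFECT_DENSITY)
    cost = silicon_per_good_product(TOTAL_AREA, n, DEFECT_DENSITY)
    print(f"{n} die(s): yield per die {y:.0%}, "
          f"silicon per good product {cost:.2f} cm^2")
```

With these placeholder values, a single large die yields roughly 55%, while each of four smaller dies yields roughly 86%, so noticeably less silicon is discarded per working product. Real cost comparisons also depend on packaging and testing, which this sketch leaves out.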

Although development has changed, Moore's law is still an important concept because it explains how the digital world reached its current level. Many of the technologies we take for granted today became possible because the chip industry was able for decades to deliver ever more computing power at lower cost. This applies to everything from the spread of the internet and data centers to smartphones, navigation, streaming, and modern medical equipment. Without this long period of rapid improvement, digital infrastructure would have looked completely different.

Moore's law is also relevant as a reminder that technological progress does not automatically continue at the same pace forever. Many people became used to assuming that the next generation of hardware would almost by itself solve problems of speed and capacity. Today, progress often requires more creativity, better design, and larger investments. Knowing Moore's law therefore provides both historical understanding and a more realistic view of the technology of the future.

Moore's law began as an observation about the number of transistors on chips, but it came to symbolize the entire explosive development of computer technology over several decades. It helped explain why electronics became smaller, cheaper, and more powerful, and why digital products spread to almost every part of society. For beginners, it is a useful key to understanding how modern technology became possible.

Today, the classic development is not as simple as before, because both physical and economic limits play a greater role. Nevertheless, the legacy of Moore's law lives on in the way the chip industry thinks about innovation. The progress of the future may come not only from smaller transistors, but also from new designs, specialized chips, and smarter software. That is why Moore's law is still worth knowing: not as an eternal guarantee, but as a central explanation of the technological world we live in.